Note: Below are some responses to frequently asked questions that have been posed to us by students, reviewers, and interested scholars. Of course, our responses reflect our views on the state of the field at this moment in time; they will be updated as new data become available. Please don't hesitate to email us if we can clarify anything further! - Paul Eastwick and Eli Finkel

PREDICTIVE VALIDITY OF IDEAL PARTNER PREFERENCES

What are “ideal partner preferences,” and how should they be related to romantic evaluations of partners?

Ideal partner preferences (also called “mate preferences” and “ideal standards”) are the qualities that people rate highly when thinking about their ideal romantic partner. Typically, researchers operationalize ideals with respect to traits, such as attractiveness or warmth or extraversion. I might say that I want a partner who is especially extraverted, whereas you might say you want a partner who is not especially extraverted.

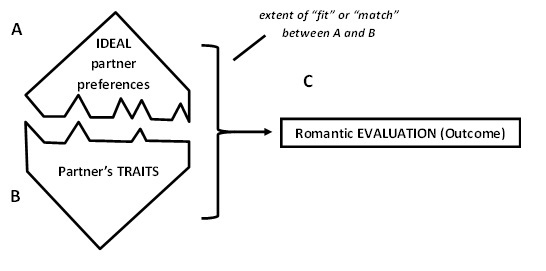

When we test the predictive validity of ideals, we are asking the question: Does the extent to which a partner matches my ideals predict how positively I evaluate him/her?

That is, does the extent of matching between (A) the participant’s ideals (e.g., desiring extraversion in a partner) and (B) the partner’s traits (e.g., the partner’s level of extraversion) predict (C) the participant’s evaluation of the partner (e.g., how much the participant loves the partner)? Answering this question typically requires measures of all three constructs, which we refer to as the ideal, the trait, and the evaluation, respectively.

How do you assess the extent to which someone’s ideals match the traits of a partner?

There are two ways to compute “match.” The first is the ideal × trait interaction, which captures the extent to which the fit between one ideal trait and the partner’s level of that trait predicts romantic evaluation, over and above the main effect of ideal and the main effect of trait alone. We have called this approach the level metric. The second approach is that, for each dyad in your sample, you can compute a correlation between a participant’s ideals and a partner’s traits across multiple traits; this approach captures the relative fit between a set of ideals and corresponding traits. We call this the pattern metric. These two metrics are independent (Cronbach, 1955, called them elevation and accuracy). You can use either metric to predict the romantic evaluation outcome.
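To make the two metrics concrete, here is a minimal Python sketch. It is illustrative only and is not the analysis code from any study discussed here: the function names (`level_metric`, `pattern_metric`), the mean-centering step, and the small hand-rolled least-squares helper are all assumptions made for the example.

```python
from statistics import mean


def pearson(x, y):
    """Pearson correlation between two equal-length lists."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def ols(columns, y):
    """Least-squares coefficients of y on the predictor columns (intercept first)."""
    n = len(y)
    X = [[1.0] + [col[i] for col in columns] for i in range(n)]
    k = len(X[0])
    # Normal equations (X'X) b = X'y, stored as an augmented k x (k+1) matrix.
    A = [[sum(X[r][i] * X[r][j] for r in range(n)) for j in range(k)]
         + [sum(X[r][i] * y[r] for r in range(n))] for i in range(k)]
    # Gauss-Jordan elimination with partial pivoting.
    for c in range(k):
        p = max(range(c, k), key=lambda r: abs(A[r][c]))
        A[c], A[p] = A[p], A[c]
        A[c] = [v / A[c][c] for v in A[c]]
        for r in range(k):
            if r != c:
                A[r] = [vr - A[r][c] * vc for vr, vc in zip(A[r], A[c])]
    return [A[i][k] for i in range(k)]


def level_metric(ideal, trait, evaluation):
    """Coefficient of the ideal x trait product term for ONE trait.

    Each list has one entry per participant-partner pair. The main effects
    of ideal and trait are included, so the returned value is the
    interaction over and above them.
    """
    mi, mt = mean(ideal), mean(trait)
    ci = [v - mi for v in ideal]   # mean-centered ideal
    ct = [v - mt for v in trait]   # mean-centered trait
    product = [a * b for a, b in zip(ci, ct)]
    _, _, _, b_interaction = ols([ci, ct, product], evaluation)
    return b_interaction


def pattern_metric(ideal_profile, trait_profile):
    """Within-dyad correlation, across traits, between one participant's
    ideals and one partner's traits. Computed once per dyad."""
    return pearson(ideal_profile, trait_profile)
```

In this sketch the level metric yields a single coefficient for the whole sample, whereas the pattern metric yields one score per dyad that would then be used as a predictor of romantic evaluations. Data in which each participant rates several partners would likely call for a multilevel model rather than the plain regression shown here.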

Do the level metric and pattern metric matching indexes predict romantic evaluations?

For the level metric, the answer is consistently “no”. That is, ideal × trait interactions do not reliably predict romantic evaluations. This means that, if I say I really care about extraversion in a partner and you say you do not, extraversion tends to predict evaluations of romantic partners about the same for both of us.

For the pattern metric, the answer is “yes, if people are evaluating a current romantic partner.” That is, to the extent that a current partner matches my pattern of ideals (regardless of level) across a variety of traits, I report more positive romantic evaluations about him/her. If people instead evaluate partners they aren’t currently dating, then the answer is again “no.” (The clearest demonstration of these effects is in Study 3 here as well as Study 4 here.)

Importantly, new evidence suggests that the pattern metric has some statistical shortcomings (see Statistical Critique #2 below), so take these findings with a grain of salt.

Is there a published resource with more detail?

Yes. Check out this manuscript.

SEX DIFFERENCES AND IDEAL PARTNER PREFERENCES

Men and women differ in some of their ideal partner preferences. How does your work on the predictive validity of ideals relate to that literature on sex differences?

Past research has shown sex differences in ideal partner preferences: For instance, men say they care more about attractiveness in a partner than do women, suggesting that attractiveness will “fit” men’s ideals more than women’s ideals, on average. But does partner attractiveness actually predict romantic evaluations more strongly for men than for women? That is, does the sex × attractiveness interaction predict romantic evaluations? This is a fascinating question; note that it is essentially the same as the level metric test, but with sex “standing in” as a measure of ideals.

So if men say they care about attractiveness in a partner more than women, we should see that attractiveness correlates with romantic evaluations more strongly for men than for women?

That’s the logic of the sex difference prediction as we see it, and most scholars draw from evolutionary perspectives to generate the same prediction (e.g., work by Meltzer and Li described below). But agreement on this point is not universal: Some scholars do not believe that attributes like attractiveness should exhibit sex-differentiated effects on romantic evaluations (see here and here for our back-and-forth with David Schmitt on this issue).

What does the evidence show? Do men and women differ in how traits like attractiveness affect their romantic evaluations?

Our meta-analysis of 97 studies revealed no sex difference in the association of (a) attractiveness with romantic evaluations and (b) earning potential with romantic evaluations. A small handful of one-off studies purport to find these sex differences. But there is not a single direct replication of such a study or a pre-registered study showing these effects; until such studies emerge, it seems prudent to trust the meta-analysis as our best understanding of this phenomenon.

We have no empirical basis to comment on the predictive validity of other sex-differentiated traits (e.g., youth, status).

Is that just in a speed-dating context? What about other kinds of contexts?

Our conclusions extend well beyond speed-dating. Our meta-analysis included speed-dating studies, and confederate studies, and naïve participant-interaction studies, and people reporting on opposite-sex friends and acquaintances from their everyday lives, and people reporting on dating partners, and people reporting on marriage partners. Our meta-analysis involved tens of thousands of participants across a wide variety of paradigms.

However, there are reasons to think that these sex differences might emerge when people report on potential partners they have never met before (e.g., online dating website profiles). Our meta-analysis did not include these sorts of studies. We suspect that sex differences do emerge in these contexts, although there have been no large-scale meta-analyses of this topic that have accounted for publication bias.

Why would you even include speed-dating in the meta-analysis? Isn’t it a particularly artificial setting?

If you have ever seen a speed-date (a real one, not this one), you will probably have seen two people discussing some pretty ordinary details about their lives (e.g., where they are from, what they do for a living and/or study). They meander through these topics while trying to find something in common. Having interactions like these 12 times in a row might not be your cup of tea, but it’s a relatively ordinary getting-acquainted interaction.

Is there a published resource with more detail?

Yes. Please check out “Section 1: Sex differences in Ideal Partner Preferences” in this manuscript.

CONCEPTUAL CRITIQUES

Conceptual critique #1: As the Rolling Stones clearly explained, “you can’t always get what you want.” Isn’t that what your data are showing?

No. The dependent variable (i.e., the participant’s evaluation of the partner) is not “get” but rather “like” or “desire” or “love.” If you say you “want” a trait in a partner, do you like or desire partners more to the extent that they have that trait? So a proper adaptation of the song title for the current framework would be “you can’t always desire what you want”. Not as lyrically compelling, we grant, but psychologically fascinating (if not downright bizarre).

Conceptual critique #2: Did you just rediscover that attitudes sometimes do and sometimes do not predict behavior?

No, because our dependent variables are typically evaluations (e.g., “how much do you love your partner?”), not behaviors. So in effect, the disconnect that we document is between two self-reported evaluations: I might say I want an extraverted partner (i.e., I evaluate the trait extraverted positively when considering an ideal romantic partner), but I do not desire a specific partner more to the extent he or she is extraverted (i.e., extraversion is not a “driver of liking” for me). No behavior required!

Conceptual critique #3: Mate choice is multi-determined. I might like one mate because he is attractive but another mate because he is intelligent. So of course the level metric approach can’t work, because it focuses on only one trait at a time.

On the one hand, we sometimes respond to this critique with “yes, exactly!” The multi-determinacy of mate choice makes it very challenging for the level metric to explain substantial amounts of variance in romantic evaluations. Indeed, we have found in our work that traits change the meaning of other traits in the context of a live potential romantic partner. In other words, Asch (1946) was probably right.

On the other hand, although this idea might seem intuitive now, we obviously didn’t know it all along: After all, there are many, many published studies that examine traits one at a time in mating/romantic contexts. For example, the entire literature on sex differences in mate preferences (e.g., men valuing attractiveness more than women) focuses on one trait at a time, and no one said that this literature was absurd because it tested hypotheses that focused on a single trait in isolation. So it may make sense to apply this critique consistently to all past work examining one trait at a time and say that going forward, researchers should always consider multiple traits. But it probably doesn’t make sense to apply this critique selectively to only some published studies and not others. (We have written about this issue here, too.)

STATISTICAL CRITIQUES

Statistical critique #1: People’s ideals correlate with their actual partner’s traits. That is, if I want an intelligent partner, my partner is more likely to be intelligent. Doesn’t this demonstrate that people are fulfilling their ideals by selecting partners who match their ideals?

Maybe. Or maybe it means that people’s ideals change to match the traits of their current partners (they do). Or it means that people’s ideals change to match the traits that members of one’s preferred sex generally possess (e.g., men report greater ideals for physical attractiveness because women are more attractive than men). Or it means that people tend to live near partners who match their ideals, and these are the partners that people happen to meet and start dating. Or it means that people move to areas with partners who match their ideals. All these explanations are interesting and plausible.

To put it another way: Findings that examine only the correlation between ideals and traits (e.g., Conroy-Beam & Buss, 2016; Campbell, Chin, & Stanton, 2016) omit evaluations (i.e., the crucial third variable—the DV—in the model depicted above). These perspectives assume that the partner was evaluated positively and therefore selected in favor of alternative partners. But the evaluations are not measured, and the alternative, unselected partners are not observed. Thus, in our view, there are myriad alternative explanations for these effects, and they cannot address our core question of “Do people positively evaluate romantic partners to the extent that those partners match their ideals?” These correlations could be interesting for a number of reasons, but it’s hard to know (without measuring additional variables) what causes them to emerge.

Statistical critique #2: Just use the pattern metric, then! It’s better than the level metric because it captures more traits.

We used to believe this…but now we don’t. We changed our minds on the viability of the pattern metric because of this paper by Wood and Furr (2015). These authors point out that similarity metrics (of which the pattern metric is one example) have embedded within them a large chunk of variance due to normative positivity. This “Normative Desirability Confound” means that the pattern metric might correlate with DVs like desire or satisfaction for a really boring reason: We like people who have positive traits.

There are ways of correcting the pattern metric for this concern. In our view, the whole prior literature using the pattern metric (including our own papers!) is inconclusive until the Wood and Furr (2015) critique can be addressed. More research is needed.
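As a rough illustration of what such a correction can look like, the sketch below removes the sample-average ("normative") profile from every ideal profile and every trait profile before computing the within-dyad correlation. This is one commonly described way of separating normative from distinctive similarity; whether it is the specific correction that should be preferred is an assumption on our part, and the trait labels and ratings are invented toy numbers.

```python
from statistics import mean


def pearson(x, y):
    """Pearson correlation between two equal-length lists."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def normative_profile(profiles):
    """Average rating of each trait across all profiles in the sample."""
    return [mean(column) for column in zip(*profiles)]


def distinctive(profile, norm):
    """What is left of a profile after removing the normative profile."""
    return [value - m for value, m in zip(profile, norm)]


def raw_and_distinctive_match(ideal_profiles, trait_profiles):
    """Return (raw, distinctive) pattern-metric scores for each dyad."""
    ideal_norm = normative_profile(ideal_profiles)
    trait_norm = normative_profile(trait_profiles)
    scores = []
    for ideals, traits in zip(ideal_profiles, trait_profiles):
        raw = pearson(ideals, traits)
        dist = pearson(distinctive(ideals, ideal_norm),
                       distinctive(traits, trait_norm))
        scores.append((raw, dist))
    return scores


# Invented ratings on four traits: warm, attractive, extraverted, messy.
# Nearly everyone wants warmth and nobody wants messiness, and most
# partners are rated as fairly warm and not very messy.
ideal_profiles = [[9, 7, 6, 2], [8, 8, 3, 1], [9, 5, 5, 3]]
trait_profiles = [[8, 6, 7, 3], [7, 7, 5, 2], [8, 7, 3, 2]]

for raw, dist in raw_and_distinctive_match(ideal_profiles, trait_profiles):
    print(f"raw match = {raw:.2f}, distinctive match = {dist:.2f}")
```

In the toy data every raw match is high simply because all profiles share the same broadly desirable shape, which is the confound in miniature. The distinctive scores reflect only how a person's ideals and a partner's traits depart from that shared shape.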

Statistical critique #3: Just ask people “to what extent does your partner match your ideals for attractiveness?” These items predict evaluations strongly.

These items (which we call “direct-estimation” items) dodge the pattern vs. level metric issue by simply asking participants to make the matching judgment themselves. We think participants answer these items by simply reporting the extent to which the partner possesses the trait. Indeed, a direct-estimation item like “to what extent does your partner match your ideals for attractiveness” correlates with the judgment “to what extent does your partner possess the trait attractiveness” at about r = .90 (Rodriguez, Hadden, & Knee, 2015). Correlations that high are a pretty strong indicator that you assessed the same construct twice.

So direct-estimation items probably predict romantic evaluations because perceptions of positive traits predict romantic evaluations. It probably doesn’t have anything to do with ideals.

Statistical critique #4: Don’t speed-daters exhibit a truncated range of traits? I don’t think unattractive people or people with poor earning potential go speed-dating.

This critique almost certainly does not apply to attractiveness. Consider these data from one of our speed-dating studies. Here, the speed-dating participants rated each other on physically attractive and sexy/hot on 1 (not at all) to 9 (extremely) scales. Here is a histogram of the average of these two items (N on the y axis; each speed-dater rated ~12 targets):

We might be missing some exceptionally attractive people, but otherwise, this looks pretty uniform.

In an interesting twist, consider what happens if you ask coders to rate photographs of these same people:

Now, it looks as if speed-daters are mostly unattractive people. But the reality is, this is what happens when you ask coders to rate photographs: they tend to be really harsh, and about 80-90% of the judgments fall below the midpoint of the scale. So this isn’t something about speed-dating…it’s something about how people judge photographs.

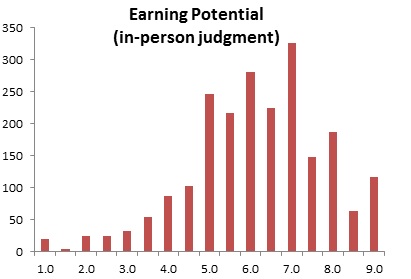

As for earning potential (an average of good career prospects and ambitious/driven), we’re open to this critique in the context of our collegiate speed-dating events:

Here, about 75% of people are above the midpoint of the scale.

One reason we’re not too concerned about this critique (broadly speaking) is that earning potential was not truncated in the studies in our meta-analysis. In fact, many of those studies were representative samples that incorporated people’s actual income as a predictor! So although this critique applies fairly to our speed-dating studies, the meta-analytic results reassure us that our results generalize to representative samples.

BRIEF SYNOPSES OF SPECIFIC CRITICAL PAPERS

Fletcher, G. J., Kerr, P. S., Li, N. P., & Valentine, K. A. (2014). Predicting romantic interest and decisions in the very early stages of mate selection: Standards, accuracy, and sex differences. Personality and Social Psychology Bulletin, 40, 540-550.

• What this paper shows: Direct-estimation items for several traits predict initial attraction very strongly.

• Why we don’t think you should be convinced (yet): As we discussed above (see statistical critique #3), direct-estimation items are really just a second measure of the trait. Of course positive traits are positively associated with romantic interest; standards/ideals are not relevant. Also, it is not persuasive (in our view) to use direct-estimation items to predict a dependent measure while controlling for the corresponding trait (a common practice). This is a method of attempting to demonstrate “incremental validity” that is known to be flawed (Westfall & Yarkoni, 2016).

• What would be convincing: For this study, it’s pretty easy. Westfall and Yarkoni (2016) explain how to conduct incremental validity analyses correctly with structural equation modeling. If (a) a structural equation model indicates that the direct-estimation items and the traits load on separate latent factors, and (b) these two latent factors both predict romantic interest, we would be persuaded that the direct-estimation items are measuring (and predicting) something important.

Schmitt, D. P. (2014). On the proper functions of human mate preference adaptations: Comment on Eastwick, Luchies, Finkel, and Hunt (2014). Psychological Bulletin, 140, 666-672.

• What this paper shows: This paper offers a conceptual analysis arguing that “evolutionary psychologists do not expect that obtaining one’s ideal mate will simplistically, typically, or invariantly lead to higher relationship quality” (p. 668). The paper suggests that appropriate dependent measures would be those that are more tightly linked to ancestral reproductive success. Schmitt’s critique is largely confined to our discussion (and meta-analysis) of sex differences.

• Why we don’t think you should be convinced (yet): We argued in our reply that relationship quality outcomes indeed have relevance for ancestral reproductive success. After all, romantic evaluations are the primary drivers of people’s consequential relationship decisions, such as the decision to start dating someone or to break up with him/her. Thus, we have a hard time imagining how a sex difference in an ideal partner preference has any functional consequences if it doesn’t influence romantic evaluations.

We acknowledged that sex differences might reliably emerge for other types of outcomes (e.g., a woman’s attractiveness might positively predict her offspring’s health and survival more strongly than a man’s attractiveness). But there is no evidence of such sex differences, and so we characterized these ideas as “future testable hypotheses, not alternative explanations” (p. 675).

• What would be convincing: Demonstrations of Schmitt’s many testable hypotheses, which have yet to be empirically examined. Notably, Schmitt describes how his predictions about sex differences “would not be simple” and that “Relational effects likely would be contingent on a host of factors” (p. 668). The field’s standards for convincing evidence of effects that are moderated by a “host of factors” have gone up since 2014, and so to the extent that it is possible to accumulate such evidence, it may take a while for us to see it in the published literature. Nevertheless, if a sex-differentiated effect of physical attractiveness or earning potential were to be accompanied by highly powered replications (and, ideally, preregistered analysis plans), we would find it persuasive even if the moderational pattern were complex.

Meltzer, A. L., McNulty, J. K., Jackson, G. L., & Karney, B. R. (2014). Sex differences in the implications of partner physical attractiveness for the trajectory of marital satisfaction. Journal of Personality and Social Psychology, 106, 418-428.

• What this paper shows: The effect of a partner’s attractiveness on relationship satisfaction is more positive for men (r = .10) than for women (r = -.05), p = .046.

• Why we don’t think you should be convinced (yet): We published a commentary on this article in which we argued that this sex difference does not hold up meta-analytically. In response, Meltzer and colleagues argued that only their dataset offers an appropriate test of the sex-differentiated hypothesis for physical attractiveness because (a) their female participants were of child-bearing age, (b) they examined long-term relationships (i.e., marriages), (c) they used third-party coder ratings of physical attractiveness, and (d) they controlled for confounding factors (e.g., income) and participants’ own attractiveness. In our commentary, we conducted a replication of their analysis that precisely matched these factors in an independent dataset, but failed to replicate their effect. No new replications of this effect have emerged in the four years since.

• What would be convincing: Bear in mind that these authors argue against the usefulness of our meta-analysis by suggesting that the sex difference only emerges in one cell of what would be a 16-cell design – the “young-age-long-term-relationship-third-party-coder-ratings-control-variables-accounted-for” cell. A design large enough to detect the effect size difference between r = .10 and r = -.05 in one cell of a 16-cell design would require nearly 100,000 participants to achieve 80% power. A design this large, if conducted in exactly the way that Meltzer et al. (2014) describe, would be persuasive if it documented similar effect sizes. This might be impossible, so perhaps a highly powered design with a preregistered analysis plan that tested 2 of the 16 cells would be somewhat persuasive. Based on our reading of the literature, our sense is that Meltzer et al.’s (2014) sex difference is probably a false positive, but we would be willing to update our beliefs if it were to emerge again in rigorous follow-up studies.

Li, N. P., Yong, J. C., Tov, W., Sng, O., Fletcher, G. J., Valentine, K. A., ... & Balliet, D. (2013). Mate preferences do predict attraction and choices in the early stages of mate selection. Journal of Personality and Social Psychology, 105, 757-776.

• What this paper shows: There is a large sex difference in the effect of physical attractiveness on romantic interest when participants meet and evaluate two opposite-sex potential partners (one “low attractiveness” M = ~2.4 on a 7-point scale, and one “moderate attractiveness,” M = 4.4 on a 7-point scale). Also, there is a large sex difference in the effect of earning potential on romantic interest when participants meet and evaluate two opposite-sex potential partners (one in a low status job, and one college student). The authors suggest that sex differences in the association of attractiveness/earning potential with romantic interest are especially pronounced in the low-to-moderate range of these attributes.

• Why we don’t think you should be convinced (yet): We discuss some of the inconclusive elements of this paper here on p. 678. The most troubling issue is that the sample sizes for these studies are extremely small: The physical attractiveness study used N = 8 targets (n = 2 per condition), and the earning potential study used N = 24 targets (n = 6 per condition). In the past, psychologists often mistakenly ignored sampling concerns when it came to sampling targets (Wells & Windschitl, 1999). New statistical conventions have highlighted how designs with small numbers of targets are just as problematic for statistical inference as designs with small numbers of participants (Westfall, Judd, & Kenny, 2015).

• What would be convincing: We would be persuaded by replications with sample sizes that follow modern conventions (e.g., n = 40 or 50 per cell). Also, we would be more persuaded if the “low” and “moderate” attractiveness targets were selected using percentiles (e.g., men and women between the 5th and 10th percentile on attractiveness) rather than numerical matching (e.g., men and women both scoring ~2.5 on a 7-point scale). Women tend to be rated as more attractive than men on average, and so numerical comparisons like these are somewhat ambiguous (i.e., you might be comparing a 35th percentile man with a 17th percentile woman, which is what Li et al. did, see p. 678 here). This logic also extends to the earning potential study.
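The difference between numerical matching and percentile matching can be sketched in a few lines of Python. The means and standard deviations below are hypothetical numbers chosen only for illustration (they are not estimates from Li et al. or from our data), and the normal-distribution assumption is likewise a simplification.

```python
from statistics import NormalDist

# Hypothetical distributions of attractiveness ratings on a 7-point scale.
# Women are given a higher mean to mirror the point that women tend to be
# rated as more attractive than men on average.
men = NormalDist(mu=3.0, sigma=1.2)
women = NormalDist(mu=3.6, sigma=1.2)


def percentile(dist, score):
    """Percentile rank (0-100) of a raw score within a distribution."""
    return 100 * dist.cdf(score)


def score_at_percentile(dist, pct):
    """Raw score that sits at a given percentile of a distribution."""
    return dist.inv_cdf(pct / 100)


# Numerical matching: the same raw score lands at different percentiles.
raw = 2.5
print(f"A {raw} is at percentile {percentile(men, raw):.0f} for men "
      f"and {percentile(women, raw):.0f} for women")

# Percentile matching: the same percentile corresponds to different raw scores.
pct = 10
print(f"Percentile {pct} is a raw score of {score_at_percentile(men, pct):.2f} for men "
      f"and {score_at_percentile(women, pct):.2f} for women")
```

Under these assumed distributions, a man and a woman with the same raw score occupy different positions relative to their own sex, which is why selecting targets by percentile gives a less ambiguous comparison.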